
6/26/2023 Robo 3t search commands

For this, we used the profile level locally to capture ALL queries made after a certain endpoint call was called. Identify and remove duplicate queries made at different levels of our API for certain API calls.This helped us optimize our network transfer from about 250 MB/s to 150 MB/s. We had a few queries with responses of even a couple of MBs, so it was important (and easy) to update our UI to load the data in a more agile way when needed. Find queries that are data-transfer heavy, as each record also contains the response's number of items and size.We have a caching system based on Redis, where we store responses for frequently-run queries for a couple of seconds in order to decrease the number of read operations. Identify frequently run queries as candidates for caching.For example, here I aggregate the number of queries per collection and filter: Now, with recent commands stored in the db.system.profile collection, you can easily analyze them with MongoDB queries and additional JavaScript code. See its documentation for more information. The level 1 setting captures slow queries only. Note that db.setProfilingLevel() has many options to let you configure what kind of queries you want to capture.

Wait a bit, then disable the profiling again with db.setProfilingLevel(0).Profiler starts capturing running commands into the collection db.system.profile.Connect to your MongoDB instance with the client of your choice and call db.setProfilingLevel(2).If the profiling level is enabled, the profiler captures and records data on the performance of write operations, cursors, and database commands on a running mongod instance. This works great when combined with proactive alerting on badly performing queries and also when optimizing a locally running application. This section will describe the tools and methods we used to debug slow-running queries in real time. Number of indexes to decrease the utilization when writing.How often certain queries are run to decrease utilization when reading.We did some experiments that confirmed the correlation between the white-page problem and over-utilized MongoDB drive, then started working on optimization. These queries would take the longest when MongoDB drive utilization was at its peak. We found out that certain database queries make the Meteor backend unresponsive until the query is resolved. Our main application is built using the Meteor framework. Internally, this became known as the white-page problem, as after refreshing the browser, users got stuck on a white page.ĭuring this incident, not all the application pods were affected at the same time, so we started to dig into our framework first. It all started with users reporting that our UI sometimes freezes for a couple of seconds or even minutes.

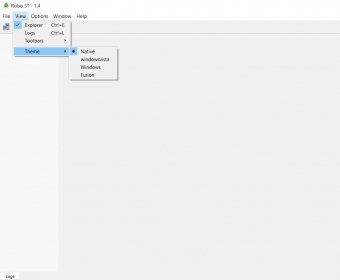

Throughout the article, we will be using the Robo 3T MongoDB client and tooling provided by MongoDB Atlas, which is our MongoDB provider.
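The profiling workflow described in this article (enable level 2, let the application run, disable profiling, then aggregate db.system.profile per collection and filter) could be sketched roughly as follows. This is not the original code from the post. The helper names `captureProfile` and `countQueriesByShape` are hypothetical, and the sketch assumes a shell where `db` is the current database handle and profile documents carry the usual `op`, `ns`, `command.filter`, `millis`, and `responseLength` fields.

```javascript
// Hypothetical helper: profile everything while `runWorkload` executes,
// then switch the profiler off again even if the workload throws.
function captureProfile(db, runWorkload) {
  db.setProfilingLevel(2); // level 2 = capture all operations
  try {
    runWorkload();
  } finally {
    db.setProfilingLevel(0); // level 0 = profiler off
  }
}

// Hypothetical helper: count captured find queries per namespace and filter.
// Assumption: grouping on the raw filter document means that queries differing
// only in their filter values end up in separate groups.
function countQueriesByShape(db, limit = 20) {
  const pipeline = [
    { $match: { op: "query" } },
    {
      $group: {
        _id: { ns: "$ns", filter: "$command.filter" },
        count: { $sum: 1 },
        avgMillis: { $avg: "$millis" },
        totalResponseBytes: { $sum: "$responseLength" },
      },
    },
    { $sort: { count: -1 } },
    { $limit: limit },
  ];
  return db.system.profile.aggregate(pipeline).toArray();
}
```

In the shell, something like `captureProfile(db, () => sleep(30000))` followed by `countQueriesByShape(db)` would list the most frequent query shapes first. Sorting on `totalResponseBytes` instead of `count` would surface the data-transfer-heavy queries.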
